I usually don't write about US politics on this blog, but sometimes it helps illustrate interesting points about the role of science in public life.

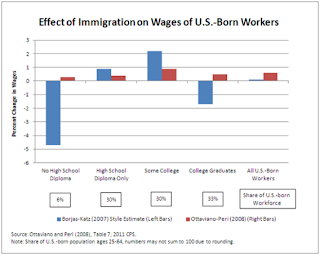

Immigration reform is now on the agenda of the US Congress. In response, Matt Yglesias (using a chart that illustrates the results of two econometric analyses, see above) writes today that:

Unfortunately, immigration scolds seem to be excessively afraid to voice their real concerns about this, which makes it difficult to address them with either evidence or policy concessions. Instead, we're stuck in a mostly phony argument about wages that does nothing to ease people's real fears about nationalism and identity.

A set of interesting claims is being put forward here, including, if I understand it right, a rather strange use of Marx's ideas about the base and superstructure. For Marx, the base was economic relations: the relationship of the different groups of people to the means of production. The superstructure was culture; this was seen as deriving from the economic structure, and it often served to reproduce this base (through what Marx called false consciousness and ideology). Implicit in Yglesias's assertion is that the effect of immigration on wages uncovered by econometricians corresponds in some sense to what a certain set of Americans feels. A cumulative economic effect of immigration on wages, one that can be uncovered only through the efforts of econometricians, is seen as an objective measure (in Theodore Porter's sense) that maps onto the subjective states of people who oppose immigration. Unfortunately, the econometricians find a low or negligible effect; therefore people who oppose immigration must be lying: their reasons are cultural--about nationalism and identity--rather than economic. We would have a better, more honest discussion, he suggests, if this sham of economic impact (expressed through numbers) were dropped and we concentrated on the cultural fears.

Something similar happened a few days ago but in a different context. Blogger wunderkind Ross Douthat wrote an op-ed in the Times arguing that the United States had low fertility rates, which he argued was a problem that the government needed to think about and perhaps mitigate using policy measures (primarily by creating a "more secure economic foundation" for working-class Americans). He concluded--ominously--by saying that policy measures could only be effective to a point; low fertility rates were a symptom of "decadence":

The retreat from child rearing is, at some level, a symptom of late-modern exhaustion — a decadence that first arose in the West but now haunts rich societies around the globe. It’s a spirit that privileges the present over the future, chooses stagnation over innovation, prefers what already exists over what might be. It embraces the comforts and pleasures of modernity, while shrugging off the basic sacrifices that built our civilization in the first place.

Such decadence need not be permanent, but neither can it be undone by political willpower alone. It can only be reversed by the slow accumulation of individual choices, which is how all social and cultural recoveries are ultimately made.

To which Matt responded (calling this last paragraph "nutty"):

It'd be a much better country if social conservatives would stop writing things like that second paragraph and focus instead on what's in the first paragraph.

There was some predictable back-and-forth (see also this). But his point was clear: it was far better to talk about policy levers, about which a debate can be had, than about notions of "decadence," about which debates are never possible, particularly if you don't subscribe to such notions.

All of which makes me think that Porter is right on this. Numbers are indeed "technologies of trust." At least in the American context, they allow arguments about public life to be made; they make possible arguments that are about objective "facts" rather than subjective "values." Not that arguments about facts are guaranteed to be settled, but arguments can indeed be made. Whereas arguments over whether the late modern age is "decadent" or not, between people with incommensurate values, are guaranteed to go nowhere.

Which brings me back to the post I began this discussion with, where Yglesias suggests that it is better to have a national American conversation about the cultural anxieties of immigration (I suspect it would turn out to be equally "nutty"). Kevin Drum responds:

Cultural insecurity and language angst are the key issues here. It doesn't matter if they're rational or not. Anything we can do to relieve those anxieties helps the cause of comprehensive immigration reform.

A public conversation to relieve cultural anxieties--would it have to take the form of numbers? What other forms could it take--and where would it lead?